This is a multipart series based on some lab tests and work I did.

- Part 1 Upgrading Hyper-V Cluster Nodes to Windows Server 2012 (Beta) – Part 1

- Part 2 Upgrading Hyper-V Cluster Nodes to Windows Server 2012 (Beta) – Part 2

- Part 3 Upgrading Hyper-V Cluster Nodes to Windows Server 2012 (Beta) – Part 3

And we have arrived at part three of my adventures while “transitioning” my Hyper-V cluster nodes to Windows Server 2012. I prefer the term transition as it is more correct. We still cannot do a rolling upgrade of a cluster. We still need to create a new cluster and recuperate the evicted nodes.

I’ll repeat myself here (again) by stating I did not reinstall the evicted nodes but upgraded them. Why? Because I can, and I wanted to try it out and see what happens. For production purposes I do advise you to rebuild nodes from scratch using a well-defined and automated plan if possible. I already mentioned this in Upgrading Hyper-V Cluster Nodes to Windows Server 2012 (Beta) – Part 1.

Moving the Storage & Hyper-V Guests

So we stopped Part 2 at a newly created cluster without any storage. That’s what we’ll be taking care of in this part. Let’s recap what we already mentioned at the end of Part 2.

We have several options for storage here. We could assign new storage and do a Quick Storage Migration between clusters using SCVMM2008R2, but that doesn’t fly as SCVMM2008R2 can’t manage Windows 2012 clusters and I don’t know if it ever will. We can do a good old manual or scripted export of the VMs from the old storage and import them to the new storage, which takes a considerable amount of time. You also need to have the extra storage available.

We can also recuperate the old storage with the VMs still on there. This could get tricky as no two clusters should be able to see and use the storage at the same time. The benefit could be that we can just use the import type in Windows Server 2012 “Register the virtual machine in-place” (use the existing unique ID) and be done with it. We’ll try that one. We’ll still have some downtime but it should be pretty fast. It’s only from Windows Server 2012 on that we’ll be able to do Shared Nothing Live Migrations between clusters, and life will be good. If you have a SAN you could also use clones to get this job done with less risk. You work on cloned data and keep the original around instead of using that for the process described below.

So how do we approach this?

Since Windows Server 2008, storage and clustering are no longer the pain they could be in earlier versions. The disk manager handles all that, and it makes life a lot easier. All disks presented to a cluster node are offline to the operating system until you bring them online, even if a disk contains data or is presented to another host, whether that is a member of another cluster or a standalone host. Pretty cool. It also means you can have all your nodes online during the process. The process of bringing the disk online, formatting it with NTFS if needed, and then adding it to the cluster as storage can be done on just one of the nodes.

As you recall I unplugged the evicted node from the iSCSI storage (you could also disable the ports) before I upgraded it. The entire iSCSI configuration got upgraded perfectly, so all I needed to do was plug the iSCSI cables in and the storage appeared offline. My old cluster node was up and running, still accessing it. Pretty slick! And great as a demo, but you can play it safer. That was fun, but perhaps we won’t be that brave in a production environment.

Options

You could decide to bring all LUNs over at once or one at a time. The process is the same. If you do it one by one you’ll have to rely on the above behavior to protect the LUNs against corruption, or you can un-present the LUNs remaining on the old cluster from the new cluster so you’ll never have an issue. We’ve done both and it works out rather fine in testing. Windows clustering is really doing its best to prevent you from shooting yourself in the foot.

Let’s say I go LUN by LUN. Now I can just remove the VMs from the old cluster using the Failover Cluster GUI so they are no longer highly available on that node. When I have no more clustered VMs on a CSV LUN I can shut down all the guests in Hyper-V Manager and stop right there.

On the old cluster I remove that LUN from the CSV storage and from the cluster storage. At that moment that LUN is already taken offline for you!

Pardon the silly size but I didn’t have space left to make a realistic screenshot.

Great, Windows is protecting us against any possible data corruption! So now I can un-present the LUN from the old cluster nodes. The next step is to enable the iSCSI ports and present that LUN to the new cluster node or nodes (depending on where in the x number of nodes process you are), or just plug in the cable.

You’ll then see the new LUN offline on the new cluster. We can then bring the LUN online so it will be available to add to the cluster. Just right-click that disk and select “Online”.

Right click on storage

Select a disk that’s available to add to the cluster.

Things have gotten a lot simpler with CSV in Windows Server 2012. No more enabling it with a funky warning message that’s well meant but rather confusing and annoying. You just right-click the disk and choose “Add to Cluster Shared Volumes” and that’s it.

And there it is. That disk in our new cluster is ready to use as a CSV.
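The order of the per-LUN steps above matters, because no two clusters should use the same LUN at once. As a minimal sketch (not something used in the lab), the Python below tracks one LUN through those steps and refuses to skip ahead. The step labels and the `LunHandover` helper are hypothetical names made up for this illustration; the real work is still done in the Failover Cluster GUI, Hyper-V Manager and on the storage side.

```python
from dataclasses import dataclass, field

# Ordered steps for handing one LUN from the old cluster to the new one,
# as described in the text. The labels are for this sketch only.
HANDOVER_STEPS = [
    "remove the VMs from the old cluster",
    "shut down the guests on the LUN",
    "remove the LUN from CSV and cluster storage on the old cluster",
    "un-present the LUN from the old cluster nodes",
    "present the LUN to the new cluster node(s)",
    "bring the disk online on one new node",
    "add the disk to the new cluster",
    "add the disk to Cluster Shared Volumes",
]


@dataclass
class LunHandover:
    """Tracks how far one LUN is in the move (hypothetical helper)."""

    name: str
    done: list = field(default_factory=list)

    def next_step(self):
        """Return the next pending step, or None when the LUN is finished."""
        if len(self.done) < len(HANDOVER_STEPS):
            return HANDOVER_STEPS[len(self.done)]
        return None

    def complete(self, step):
        """Mark a step as done; refuse anything other than the next step."""
        expected = self.next_step()
        if step != expected:
            raise ValueError(f"{self.name}: expected {expected!r}, got {step!r}")
        self.done.append(step)

    @property
    def ready_for_import(self):
        """True once the LUN is a CSV on the new cluster."""
        return self.next_step() is None
```

Calling `complete()` with each entry of `HANDOVER_STEPS` in order ends with `ready_for_import` being true, while trying to present the LUN to the new cluster before it has been removed from the old one raises a `ValueError`.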

So we can now use a nifty new capability in Windows Server 2012 Hyper-V: “Register the virtual machine in-place” (use the existing unique ID).

The wizard starts.

Select the folder where your VM or VMs live. Yes, you can do multiple, given that your folder structure allows for this.

It found one VM in our folder.
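If you want to know up front what lives under a folder on the CSV, you can look for the VM configuration files yourself. The sketch below is only an illustration and does not claim to be what the wizard does internally. It assumes the default layout of this Hyper-V generation, where each VM's configuration is a `<GUID>.xml` file inside a folder named `Virtual Machines`; `find_vm_configs` is a hypothetical helper name.

```python
import re
from pathlib import Path

# Assumption: VM configuration files are named <GUID>.xml.
GUID_XML = re.compile(
    r"^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\.xml$",
    re.IGNORECASE,
)


def find_vm_configs(root):
    """Return sorted paths of GUID-named .xml files in 'Virtual Machines' folders."""
    root = Path(root)
    return sorted(
        p
        for p in root.rglob("*")
        # Assumption: only the default 'Virtual Machines' folder is considered,
        # which skips GUID-named snapshot files stored in other folders.
        if p.is_file()
        and GUID_XML.match(p.name)
        and p.parent.name.lower() == "virtual machines"
    )
```

Pointing it at the folder you would select in the wizard returns one path per VM configuration found, so an empty list is an early hint that the folder structure is not what you expected.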

We click Next

We select “Register the virtual machine in-place” (use the existing unique ID) and click Next.

If something is not right, like some forgotten “saved” states, you’ll get a chance to dump those or cancel the process to deal with it properly before trying it again.

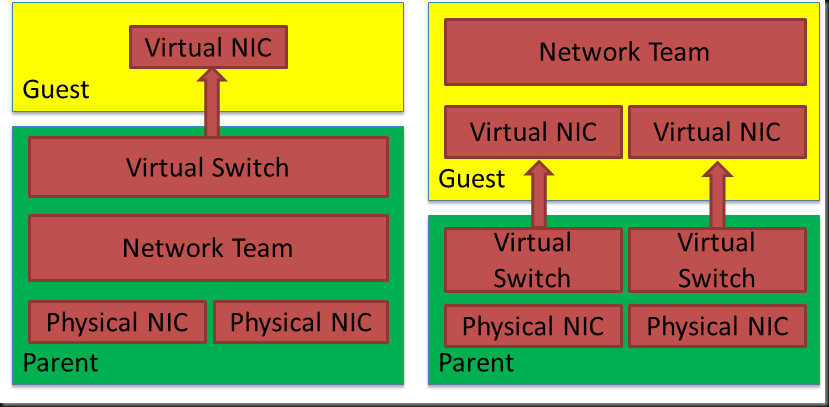

If virtual network names do not match you’ll get the opportunity to correct that by specifying what virtual switch to use.

If all was well in the first place, or after you’ve fixed any issues like the ones demonstrated above, you’re good to go. Click Finish and enjoy your Windows Server 2012 Hyper-V guest.

At this point you can already start your VMs. I know that the next step is to make all these VMs highly available, but here we have some good news as well. You can now make running VMs highly available. Yeah! They no longer need to be shut down. All this is done via the well-known process so I’m not going to walk through the entire process here. But the screenshot of making a running VM highly available is worth posting.
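Everything above was done in the GUI, but the same three actions (register in place, start, make highly available) can probably be scripted as well. The sketch below only builds the PowerShell command text; it does not run anything. It assumes the `Import-VM`, `Start-VM` and `Add-ClusterVirtualMachineRole` cmdlets as they exist in the released Windows Server 2012 Hyper-V and FailoverClusters modules, so the beta may differ and you should check `Get-Help` first. The helper names are hypothetical.

```python
def ps_quote(value):
    """Quote a value as a PowerShell single-quoted string literal."""
    return "'" + str(value).replace("'", "''") + "'"


def import_and_cluster_commands(config_path, vm_name):
    """Build PowerShell lines: register a VM in place, start it, cluster it."""
    return [
        # Assumption: Import-VM without -Copy registers the VM in place
        # and keeps its existing unique ID.
        f"Import-VM -Path {ps_quote(config_path)}",
        f"Start-VM -Name {ps_quote(vm_name)}",
        # The VM can stay running while it is made highly available.
        f"Add-ClusterVirtualMachineRole -VMName {ps_quote(vm_name)}",
    ]
```

You would pass the full path of one VM's configuration file on the CSV and the VM's name, review the three resulting lines, and then paste them into a PowerShell session on one of the new cluster nodes.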