VMQ or VMDq

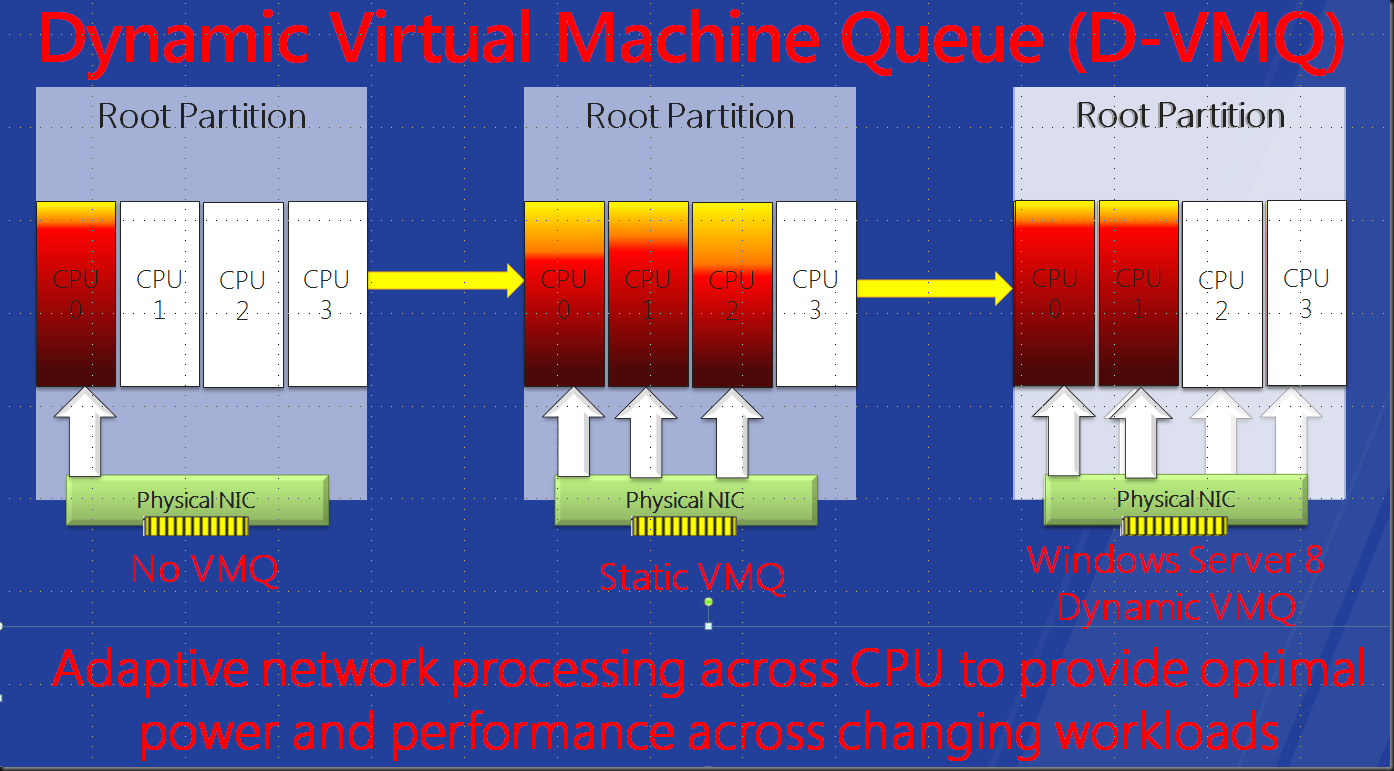

To discuss Dynamic VMQ (DVMQ) we first need to talk about VMQ, or VMDq in Intel speak. VMQ lets the physical NIC create unique virtual network queues for each virtual machine (VM) on the host. These are used to pass network packets directly from the hypervisor to the VM. This removes a lot of the CPU overhead on the host associated with network traffic, as it spreads the load over multiple cores. It is the same sort of CPU overhead you might see with 10Gbps networking on a server that isn’t using RSS (see my previous blog post Know What Receive Side Scaling (RSS) Is For Better Decisions With Windows 8). Under high network traffic one core will hit 100% while the others are idle. This means you’ll never get more than 3Gbps to 4Gbps of bandwidth out of your 10Gbps card, as the CPU is the bottleneck.

VMQ leverages the NIC hardware for sorting, routing & packet filtering of the network packets from an external virtual machine network directly to virtual machines, and enables you to use 8Gbps or more of your 10Gbps bandwidth.
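To make the sorting idea a bit more tangible, here is a minimal Python sketch of what the NIC does conceptually: it looks at the destination MAC address of each incoming packet and drops it in the queue that belongs to that VM. This is a toy model, not driver code. The MAC addresses, the queue numbers and the idea of a shared default queue with number 0 for unmatched traffic are assumptions made up for the illustration.

```python
from collections import defaultdict

# Assumption for this sketch: traffic that matches no VM filter
# ends up in a shared default queue, numbered 0 here.
DEFAULT_QUEUE = 0


def sort_into_queues(packets, mac_to_queue):
    """Group packet payloads by the queue that owns their destination MAC."""
    queues = defaultdict(list)
    for dst_mac, payload in packets:
        queue_id = mac_to_queue.get(dst_mac, DEFAULT_QUEUE)
        queues[queue_id].append(payload)
    return dict(queues)


# Hypothetical VMs: each VM's MAC address is mapped to its own queue.
mac_to_queue = {
    "00:15:5d:00:00:01": 1,
    "00:15:5d:00:00:02": 2,
}

# Hypothetical incoming traffic as (destination MAC, payload) pairs.
packets = [
    ("00:15:5d:00:00:01", "a"),
    ("00:15:5d:00:00:02", "b"),
    ("00:15:5d:00:00:01", "c"),
    ("00:15:5d:00:00:99", "d"),  # no queue for this MAC
]

sorted_queues = sort_into_queues(packets, mac_to_queue)
for queue_id in sorted(sorted_queues):
    print(queue_id, sorted_queues[queue_id])
```

Because every queue can be serviced by a different core, the sorting work no longer piles up on a single core of the host.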

Now the number of queues isn’t unlimited, and queues are allocated to virtual machines on a first-come, first-served basis. So don’t enable this for machines without heavy network traffic; you’ll just waste queues. It is advised to use it only on those virtual machines with heavy inbound traffic, because VMQ is most effective at improving receive-side performance. So use your queues where they make a difference.
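Why a wasted queue hurts is easy to show with a small Python sketch of first-come, first-served allocation. It is only a model of the behavior described above, assuming a NIC with a fixed number of queues; the VM names, the queue count of 2 and the `allocate_queues` helper are hypothetical.

```python
def allocate_queues(vms_in_start_order, queue_count):
    """Hand out queues first-come, first-served.

    vms_in_start_order: list of (vm_name, vmq_enabled) in start order.
    Returns (assigned, unassigned): a dict of vm_name -> queue number,
    and a list of VMs that did not get a queue.
    """
    free = list(range(1, queue_count + 1))
    assigned = {}
    unassigned = []
    for name, vmq_enabled in vms_in_start_order:
        if vmq_enabled and free:
            assigned[name] = free.pop(0)
        else:
            unassigned.append(name)
    return assigned, unassigned


# VMQ enabled everywhere: the quiet web server grabs a queue
# and the busy SQL server, which starts last, gets nothing.
everywhere = [("idle-web", True), ("file-server", True), ("sql", True)]
assigned_all, left_out_all = allocate_queues(everywhere, queue_count=2)
print(assigned_all, left_out_all)

# VMQ enabled only where it matters: both busy VMs get a queue.
selective = [("idle-web", False), ("file-server", True), ("sql", True)]
assigned_sel, left_out_sel = allocate_queues(selective, queue_count=2)
print(assigned_sel, left_out_sel)
```

In the first run the queue that went to the idle VM is exactly the one the SQL server would have needed.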

If you want to see what VMQ is all about take a look at this video by Intel.

The video gives you a nice animated explanation of the benefits. You can think of it as providing the same benefits to the VMs as RSS does to the host. VMQ also prevents one core from being overloaded with interrupts due to high network IO and, as such, becoming the bottleneck blocking performance. This is important, as you might end up buying more servers to handle certain loads because of it. Sure, with 1Gbps networking modern CPUs can handle a very big load, but with 10Gbps becoming ever more common this issue is a lot more acute again than it used to be. That’s why you see RSS being enabled by default in Windows Server 2008 R2.

VMQ Coalescing – The Good & The Bad

There is a little dark side to VMQ. You’ve successfully relieved the bottleneck on the host for network filtering and sorting, but you now have a potential bottleneck where you need a CPU interrupt for every queue. The documentation states as follows:

The network adapter delivers interrupts to the Management Operating system for each VMQ on the processor based on the processor VMQ affinity. If the interrupts are spread across many processors, the number of interrupts delivered can grow substantially, until the overhead of interrupt handling can outweigh the benefit of using VMQ. To reduce the number of interrupts used, Microsoft has encouraged network adapter manufacturers to design for interrupt coalescing, also called shared interrupts. Using shared interrupts, the network adapter processes multiple queues with the same processor affinity in the same interrupt. This reduces the overall number of interrupts. At the time of this publication, all network adapters that support VMQ also support interrupt coalescing.
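The effect of shared interrupts described in that quote can be counted with a few lines of Python. This is a deliberately simplified model of a single round in which several queues have packets waiting; it assumes one interrupt per busy queue without coalescing and one interrupt per processor with coalescing. The queue numbers and processor affinities are invented for the example, and real adapters are more subtle than this.

```python
from collections import Counter


def interrupts_per_processor(queue_affinity, busy_queues, coalesce):
    """Count interrupts raised on each processor in one round.

    queue_affinity: dict of queue number -> processor number.
    busy_queues: queues that have packets waiting this round.
    coalesce: if True, queues sharing a processor share one interrupt.
    """
    counts = Counter()
    for queue in busy_queues:
        cpu = queue_affinity[queue]
        if coalesce and counts[cpu] > 0:
            continue  # this processor is already being interrupted
        counts[cpu] += 1
    return dict(counts)


# Hypothetical layout: five queues spread over two processors.
queue_affinity = {1: 0, 2: 0, 3: 1, 4: 1, 5: 1}
busy_queues = [1, 2, 3, 4, 5]

without = interrupts_per_processor(queue_affinity, busy_queues, coalesce=False)
shared = interrupts_per_processor(queue_affinity, busy_queues, coalesce=True)
print("one interrupt per queue:", without)
print("shared interrupts:      ", shared)
```

In this toy layout the total drops from five interrupts to two, which is the reduction the documentation is after.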

Now coalescing works, but the configuration and the possible headaches it can give you are material for another blog post. It can be very tedious, and you have to watch every action on your NIC and Virtual Switch configuration like a hawk or you’ll get registry values overwritten, value types changed and such. This leads to all kinds of issues, ranging from VMQ coalescing not working to your cluster going down the drain (worst case). The management of VMQ coalescing is rather tedious and as such error prone. This is not good. Combine that with the sometimes problematic NIC teaming and you get a lot of possible and confusing permutations where things can go wrong. Use it when you can handle the complexity or it might bite you.

Dynamic VMQ For A Better Future

Now bring on Dynamic VMQ (DVMQ). All I know about this is from the Build sessions, and I’ll revisit this once I get to test it for real with the beta or release candidate. I really hope this is better documented and doesn’t come with the issues we’ve had with VMQ Coalescing. It brings the promise of easy and trouble-free VMQ, where the load is evenly balanced among the cores and the burden of too many interrupts is avoided. A sort of auto scaling, if you like, that optimizes queue handling & interrupts.

That means it can replace VMQ Coalescing, and DVMQ will deal with this potential bottleneck on its own. Due to the issues I’ve had with coalescing I’m looking forward to that one. Take note that you should be able to live migrate virtual machines from a host with VMQ capabilities to a host without them, although you do lose the performance benefit. I have no confirmation on this yet and, as mentioned, I’m waiting for the Beta bits to try it out. It’s like Live Migration between an SR-IOV enabled host and a non SR-IOV enabled host, which is confirmed as possible. On that front Microsoft seems to be doing a really good job, better than the competition.